Are you tired of hearing about AI yet?

It seems like this technology is all anyone ever talks about these days. But if you work in cybersecurity, you can’t afford to ignore it.

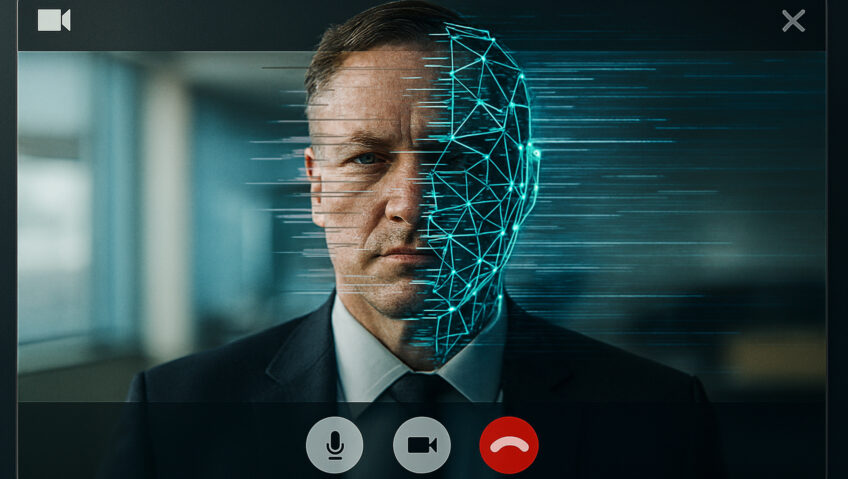

AI-driven cybercrime and fraud are skyrocketing. AI lets criminals create highly convincing deepfakes that can trick even tech-savvy employees into handing over sensitive data and permissions.

Deloitte has predicted that AI-enabled fraud losses could hit about $40 billion by 2027.

It’s not hopeless, though. There’s a lot you and your teams can do to reduce your risk here, even as this technology gets more advanced.

What’s happening?

If you’ve ever seen a good deepfake, you’ll know just how spine-chillingly believable it can be.

Criminals are using AI-generated videos to pose as trusted authorities like CEOs, clients, and finance teams… and using them to steal data and money.

Last year, an employee at a British firm joined a video call where every other participant was AI-generated. This led to a theft of about US$25 million.

(Keep in mind, this was in early 2024, and the technology has been rapidly improving ever since.)

Closer to home, research found that 20% of Australian companies said they received a deepfake-based scam attempt between late 2023 and late 2024. And 12% fell victim to the scam.

What can you do?

It’s easy to feel disheartened and helpless, but you can improve your chances significantly. Here’s what you can do to protect your company and your people from deepfakes.

● Use AI-detection tools that can spot anomalies like synthesised voice patterns or giveaways in fake videos. As AI gets more powerful, methods for detecting it are improving, too.

● Always require multi-factor verification for any serious request. If someone (even a trusted person) asks for sensitive information, have your employee call them back on a known number before acting.

● Invest in training and educating your staff about deepfake threats, warning signs, and response plans. Regular drills and simulations will help employees recognise suspicious cues and take action fast when things go wrong.

● Have checks and safeguards in place for financial or sensitive transactions. For example, transfers should require multiple written approvals, confirmations, and built-in time delays. Any unexpected, emotional, rushed, or unconventional request should be met with a high degree of skepticism.
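For teams that want to build these safeguards into their payment tooling, here is a minimal sketch in Python of how the callback, multiple-approval, and time-delay checks above might fit together. Everything in it is an assumption for illustration: the names `TransferRequest` and `blocking_reasons`, the two-approver threshold, and the 24-hour delay are hypothetical and not taken from any particular product or standard.

```python
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Hypothetical policy values, chosen for illustration only.
REQUIRED_APPROVALS = 2
COOLING_OFF = timedelta(hours=24)


@dataclass
class TransferRequest:
    requester: str
    amount: float
    created_at: datetime
    approvers: set[str] = field(default_factory=set)
    callback_verified: bool = False  # set once someone has called back on a known number

    def approve(self, approver: str) -> None:
        # The person asking for the transfer cannot approve it themselves.
        if approver == self.requester:
            raise ValueError("requester cannot approve their own request")
        self.approvers.add(approver)


def blocking_reasons(req: TransferRequest, now: datetime) -> list[str]:
    """Return the reasons a transfer must still wait; an empty list means it may proceed."""
    reasons = []
    if not req.callback_verified:
        reasons.append("callback on a known number not completed")
    if len(req.approvers) < REQUIRED_APPROVALS:
        reasons.append("not enough independent approvals")
    if now - req.created_at < COOLING_OFF:
        reasons.append("cooling-off period still running")
    return reasons


# Example: one approval, no callback yet, one hour after the request.
req = TransferRequest("cfo@example.com", 250_000, datetime(2025, 1, 6, 9, 0))
req.approve("controller@example.com")
print(blocking_reasons(req, datetime(2025, 1, 6, 10, 0)))
```

The point of returning every outstanding reason, rather than a simple yes or no, is that a rushed or emotional request cannot skip a step just because one check has passed.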

About 10% of companies do nothing at all to defend against deepfakes. Don’t be one of them. The steps above are a good start, but to truly defend against AI-driven cybercrime, you need a disciplined and sustainable strategy.

If you’re not sure where or how to get started, we’re here to help. Get in touch with us at Aryon to find out how you can defend yourself against deepfakes and keep your company safe.